Notification spam ruins social networks, diluting real human interaction. Desperate to gain an audience, users pay services to rapidly follow and unfollow tons of people in hopes that some will follow them back. The services can either automate this process or provide tools for users to generate this spam themselves. Earlier this month, a TechCrunch investigation found over two dozen follow-spam companies were paying Instagram to run ads for them. Instagram banned all the services in response and vowed to hunt down similar ones more aggressively.

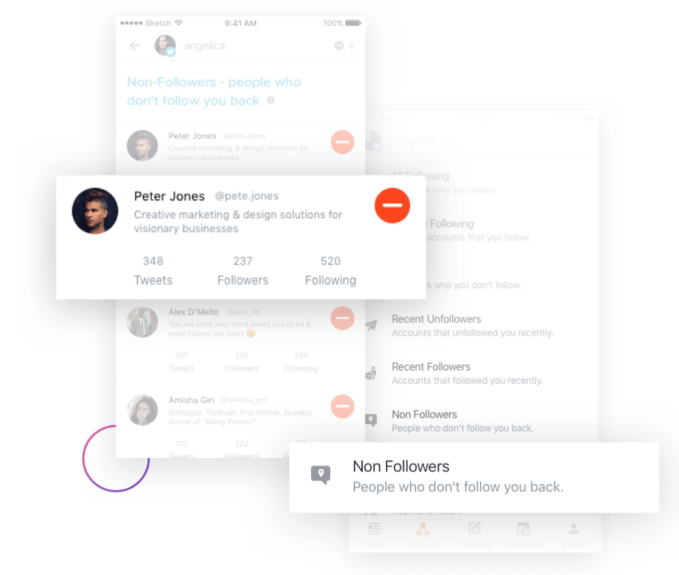

ManageFlitter’s spammy follow/unfollow tools

Today, Twitter is stepping up its fight against notification spammers. Earlier today, three of these services (ManageFlitter, Statusbrew and Crowdfire) stopped working, as spotted by social media consultant Matt Navarra.

TechCrunch inquired with Twitter about whether it had enforced its policy against those companies. A spokesperson provided this comment: “We have suspended these three apps for having repeatedly violated our API rules related to aggressive following & follow churn. As a part of our commitment to building a healthy service, we remain focused on rapidly curbing spam and abuse originating from use of Twitter’s APIs.” These apps will cease to function since they’ll no longer be able to programmatically interact with Twitter to follow or unfollow people or take other actions.

Twitter’s policies specify that “Aggressive following (Accounts who follow or unfollow Twitter accounts in a bulk, aggressive, or indiscriminate manner) is a violation of the Twitter Rules.” This is to prevent a ‘tragedy of the commons’ situation. These services and their customers exploit Twitter’s platform, worsening the experience of everyone else to grow these customers’ follower counts. We dug into these three apps and found they each promoted features designed to help their customers spam Twitter users.

ManageFlitter’s site promotes how “Following relevant people on Twitter is a great way to gain new followers. Find people who are interested in similar topics, follow them and often they will follow you back.” For $12 to $49 per month, customers can use this feature shown in the GIF above to rapidly follow others, while another feature lets them check back a few days later and rapidly unfollow everyone who didn’t follow them back.

Crowdfire had already gotten in trouble with Twitter for offering a prohibited auto-DM feature and tools specifically for generating follow notifications. Yet it only changed its functionality to dip just beneath the rate limits Twitter imposes. It seems it preferred charging users up to $75 per month to abuse the Twitter ecosystem over accepting that what it was doing was wrong.

StatusBrew details how “Many a time when you follow users, they do not follow back . . . thereby, you might want to disconnect with such users after let’s say 7 days. Under ‘Cleanup Suggestion’ we give you a reverse sorted list of the people who’re Not Following Back”. It charges $25 to $416 per month for these spam tools. After losing its API access today, StatusBrew posted a confusing half-mea culpa, half-“it was our customers’ fault” blog post announcing it will shut down its follow/unfollow features.

Twitter tells TechCrunch it will allow these companies to “apply for a new developer account and register a new, compliant app” but the existing apps will remain suspended. I think they deserve an additional time-out period. But still, this is a good step towards Twitter protecting the health of conversation on its platform from greedy spam services. I’d urge the company to also work to prevent companies and sketchy individuals from selling fake followers or follow/unfollow spam via Twitter ads or tweets.

When you can’t trust that someone who follows you is real, the notifications become meaningless distractions, faith in finding real connection sinks, and we become skeptical of the whole app. It’s the users that lose, so it’s the platforms’ responsibility to play referee.

from Social – TechCrunch https://techcrunch.com/2019/01/31/dont-buy-twitter-followers/
